Every new building is a gamble on the future, but some are bigger bets than others. These days, one of the highest-stakes wagers campus planners must make is how much to upgrade their high-performance computing facilities to meet tomorrow’s demand. It isn’t an easy call. Once relegated to distant basements, computer labs are now being built right in the heart of campus, a reflection of their ever-growing importance. With the number of majors that utilize high-performance computing expected to rise nationally by the end of the decade and the growing importance of cutting-edge supercomputers in recruiting the very best scholars, the overall direction is clear. But a lot can go wrong, which makes planning tomorrow’s high-performance computing facilities today very complicated and potentially risky.

Benchmarking Supercomputer Centers

One of the best ways to mitigate the risks is benchmarking, according to Luke Voiland, a Boston-based principal and executive vice president for Practice Strategy at Shepley Bulfinch. For example, Shepley Bulfinch benchmarked collaborative, teaching, and research space to help a leading university plan the facility it aimed to build before the university’s computer science teams outgrew their current homes.

Benchmarking clarified the university’s collaborative space needs for the computer center, in terms of how the institution’s computer-related departments should organize their people, processors, and power systems, says Voiland.

People: Although many institutions prepared to downsize once Covid demonstrated that even large organizations could work remotely, academic needs have not changed much. “We thought there’d be more remote learning or more remote research on university campuses, but I don’t know that that’s really come to fruition,” adds Jeffery Bottomley, a Durham, N.C.-based principal of Shepley Bulfinch.

“It didn’t really shift all that much their desires for types of space or volume of spaces,” says Voiland.

Voiland speculates that the reason there hasn’t been much of a shift is that before lockdown, academics already collaborated online more than people in the private sector. The only area where space demands have declined, says Voiland, is in administration. Several schools have made reductions in their leased administrative space.

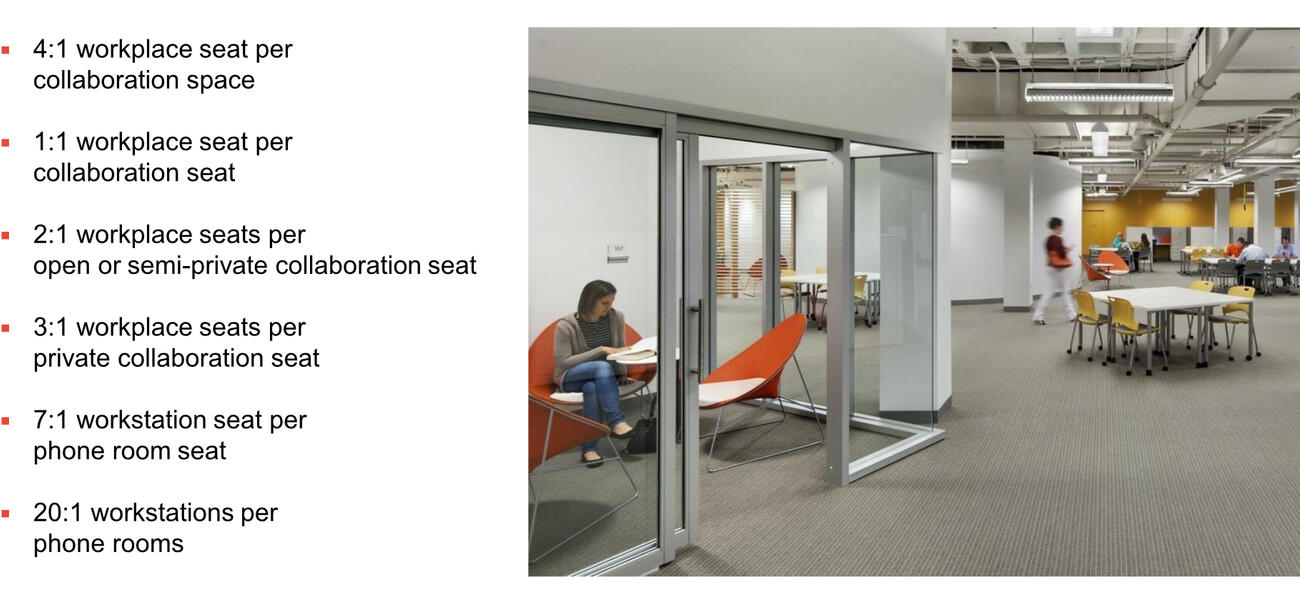

In their benchmarking study, the researchers found that the basic rule for space needs is roughly one permanent seat to one seat in a collaborative space. “From a non-computer science perspective, it seems like they are just sitting and coding all day. That is, of course, part of it, but the rest of the time, they’re collaborating around ideas about what they are going to code and in what fashion and what language to use,” says Voiland.

Most of the benchmarked universities also expect to need more seats overall. Current forecasts suggest that computer science enrollments may rise nationally by as much as 15% by 2030.

Some institutions have also now created dramatic entrances to show off their facilities to visitors and prospective scholars. “Whether it’s a state-of-the-art wet lab or, in this case, a high-powered data center, you’re trying to woo the talent to come do what they do at your school for the benefit of the school. So it behooves you to say, ‘We’ve got the state-of-the-art, purpose-built facility that will provide you all the computing power needed to support your research,’ which will then attract world-class talent to research and educate in support of the university’s academic mission for its students,” says Barton Hogge, principal at Affiliated Engineers Inc. in Chapel Hill, N.C.

Processors: Top-end high-performance computers (HPCs) can now perform 10¹⁸ calculations per second, a cool quintillion. But Hogge says progress won’t stop at the exaflop (10¹⁸ floating-point operations per second); he and other planners expect the community to build machines that may reach the zettaflop (10²¹ calculations per second), another thousand-fold jump.

As the HPC machines get faster, they are also getting heavier. A single HPC cabinet can now measure 48 by 72 by 96 inches, weigh between 6,000 and 9,000 pounds, and require reinforced floors to operate safely and effectively. “If they are not on the floor where you roll them off the truck, you’re going to need a freight elevator and you’re going to need space that can handle that sort of weight in such a small footprint,” warns Hogge.
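The floor-loading concern can be made concrete with a little arithmetic. The sketch below is a rough illustration, not a structural calculation. It assumes that 48 by 72 inches is the cabinet's footprint and 96 inches its height (the figures above do not say which dimension is which), and it spreads the weight evenly over that footprint. The function name is hypothetical.

```python
def floor_load_psf(weight_lb: float, width_in: float, depth_in: float) -> float:
    """Average load in pounds per square foot (psf) over a rectangular footprint."""
    footprint_sqft = (width_in / 12.0) * (depth_in / 12.0)  # inches -> feet
    return weight_lb / footprint_sqft


# Assumption: 48 in x 72 in is the footprint; 96 in is the height.
for weight_lb in (6000, 9000):
    print(f"{weight_lb} lb -> {floor_load_psf(weight_lb, 48, 72)} psf")
# 6000 lb -> 250.0 psf
# 9000 lb -> 375.0 psf
```

Under that assumption, a single cabinet puts roughly 250 to 375 pounds on every square foot it occupies, which is the kind of concentrated load Hogge is describing.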

Computer scientists and facility designers aren’t the only ones struggling with these giants. Campus financial officers face an equally daunting challenge. Often, says Hogge, the cost of the HPC machines can equal or exceed the cost of the facility itself.

Power: HPCs also require extraordinary amounts of power. A building that houses high-performance computers may use six to eight times the power of a conventional building, says Hogge.

“A modern HPC cabinet can draw as much power as the remainder of the support and people space of the building,” notes Hogge: as much as 400-500 kW per cabinet. That is enough electricity to charge the batteries of eight Teslas in an hour, according to Voiland. Depending on the nature of the research, some HPC components are mission critical and require UPS and generator back-up power. At this scale, back-up power can demand a sizable financial investment and substantial physical area for the power distribution equipment.
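The electric-vehicle comparison is easy to check. The sketch below assumes the cabinet draws its full rated power for the whole hour and ignores charging losses. The function names are hypothetical, and no particular battery capacity is assumed; the code only reports how much energy each of the eight cars would receive.

```python
def energy_kwh(power_kw: float, hours: float) -> float:
    """Energy delivered by a constant power draw over a period of time."""
    return power_kw * hours


def share_per_car_kwh(power_kw: float, hours: float = 1.0, cars: int = 8) -> float:
    """Split that energy evenly across a number of cars."""
    return energy_kwh(power_kw, hours) / cars


for cabinet_kw in (400, 500):
    print(f"{cabinet_kw} kW for 1 h -> {share_per_car_kwh(cabinet_kw)} kWh per car")
# 400 kW for 1 h -> 50.0 kWh per car
# 500 kW for 1 h -> 62.5 kWh per car
```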

People frequently fail to plan for the space they will need for the additional infrastructure, such as more service transformers, hydronic pumps and heat exchangers, and process air cooling units. While most modern HPCs use direct liquid cooling (DLC) to the chip, there is still a need for supplemental air cooling, according to Hogge. A modern rule of thumb, says Hogge, is to plan for an 80/20 split between water and air cooling for the HPC machines.
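As a rough illustration of that rule of thumb, the sketch below splits a cabinet's heat load between water and air. It assumes that essentially all of the electrical power a cabinet draws ends up as heat to be removed, and it uses the 400-500 kW per-cabinet range quoted earlier. The function name and the default fraction parameter are hypothetical.

```python
from typing import Tuple


def cooling_split_kw(cabinet_kw: float, water_fraction: float = 0.8) -> Tuple[float, float]:
    """Return (water_cooled_kw, air_cooled_kw) for one cabinet's heat load."""
    if not 0.0 <= water_fraction <= 1.0:
        raise ValueError("water_fraction must be between 0 and 1")
    water_kw = cabinet_kw * water_fraction
    air_kw = cabinet_kw - water_kw  # whatever the liquid loop does not remove
    return water_kw, air_kw


for cabinet_kw in (400, 500):
    water_kw, air_kw = cooling_split_kw(cabinet_kw)
    print(f"{cabinet_kw} kW cabinet: {water_kw:.0f} kW to water, {air_kw:.0f} kW to air")
# 400 kW cabinet: 320 kW to water, 80 kW to air
# 500 kW cabinet: 400 kW to water, 100 kW to air
```

Even at a 20% share, the air side of a single cabinet is a substantial load in its own right, which is why the supplemental air cooling units still need space in the plan.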

“One common misconception for space planning is to focus only on the room where the cabinets are located, often referred to as ‘white space’ or the ‘machine room,’” says Hogge. “In reality, it often requires double that space just to install the power and cooling infrastructure and up to three or four times the space for the entire facility.”
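Those multipliers can be turned into a quick first-pass estimate. The sketch below reads the quote as ratios relative to the white-space area, which is one interpretation rather than a stated formula. The 10,000-square-foot machine room is a made-up input, and the function and key names are hypothetical.

```python
def facility_area_estimate(white_space_sqft: float,
                           infra_multiplier: float = 2.0,
                           facility_multipliers: tuple = (3.0, 4.0)) -> dict:
    """Scale a white-space area by rule-of-thumb multipliers (all in square feet)."""
    low, high = facility_multipliers
    return {
        "white_space": white_space_sqft,
        "power_and_cooling": white_space_sqft * infra_multiplier,
        "total_facility_low": white_space_sqft * low,
        "total_facility_high": white_space_sqft * high,
    }


# Hypothetical 10,000 sq ft machine room.
for name, sqft in facility_area_estimate(10_000).items():
    print(f"{name}: {sqft:,.0f} sq ft")
# white_space: 10,000 sq ft
# power_and_cooling: 20,000 sq ft
# total_facility_low: 30,000 sq ft
# total_facility_high: 40,000 sq ft
```

Estimates like this are only a starting point for conversation; actual ratios depend on the cooling design, redundancy, and whether a central utility plant is included.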

Another potential pitfall in the context of a campus master plan is lack of consideration for exterior space needs and access requirements for utility services and exterior equipment such as transformers, generators, and chillers, according to Hogge. In addition to service vehicles and fire trucks, the facility must be planned to allow for large component deliveries, which often requires a multi-bay loading dock area with proper drive aisles for semi-trucks. “There’s a lot beyond the computer space that goes into programming of an HPC data center,” says Hogge.

Nor is this just a matter of adding more items to the checklist. Hogge suggests coordinating the implementation schedule of HPC expansion with increases to the capacity of the power and cooling utilities—a crucial step given some utility equipment may need to be ordered as much as two years ahead of time. “You always have to communicate between the HPC team and the campus facilities team to plan ahead, such that the power and cooling capacity is available before the next HPC machine shows up on site,” says Hogge.

The spaces themselves are also broken down into different sections. For instance, the computer generally ends up in a bright, clean area—the white space. For aesthetic reasons, such spaces tend to be bright and hospital-like, according to Hogge.

While many white spaces are housed in purpose-built facilities, they don’t have to be. Many HPC data centers are integrated into much larger buildings, where the white space is a tiny section of a larger facility.

HVAC, water cooling, and power support are located nearby, in a section of the building referred to as gray space, for obvious reasons. These gray spaces tend to be about the same size as the white space they support, but they typically require much lower investment.

Other support spaces may include a loading dock, storage, a network operations center, and “a visualization lab where your hard work is being visualized. These are very pricey and very cool,” says Hogge.

In the next frontier of high-performance computing, the machines will be faster, with denser internal utility demands, resulting in heavier cabinets that rely less on air cooling and more on water cooling. The resulting increase in energy consumption will create more opportunities for heat recapture and energy recovery to support decarbonization and resiliency for campus central heating systems.

Voiland and Hogge advise planning ahead to balance the needs for transparency and collaboration with security for asset protection. In addition, the architecture may need to compartmentalize some of the space for classified research in support of certain federal programs.

Red-Hot Snowflakes

The biggest risk a planner faces in conceptualizing the needs and costs of a new HPC space is making assumptions based on the needs of traditional data centers, warns Hogge. Proceeding with a plan without first consulting a technical professional with HPC experience is likely to lead to a frustrating and challenging experience.

Someday it may be possible to use benchmarking metrics for initial cost modeling for HPC data centers, but currently there are too many nuances and variables to reliably forecast the cost with a simple metric such as size or kilowatt energy demand for computing, says Hogge.

The inclusion of a central utility plant, mission critical HPCs, resiliency, people occupancy, or compartmentalized secured areas can all have a tremendous impact on the size and the cost of a facility. “HPC data centers tend to be highly bespoke—they’re each like a snowflake,” says Hogge.

By Bennett Voyles
